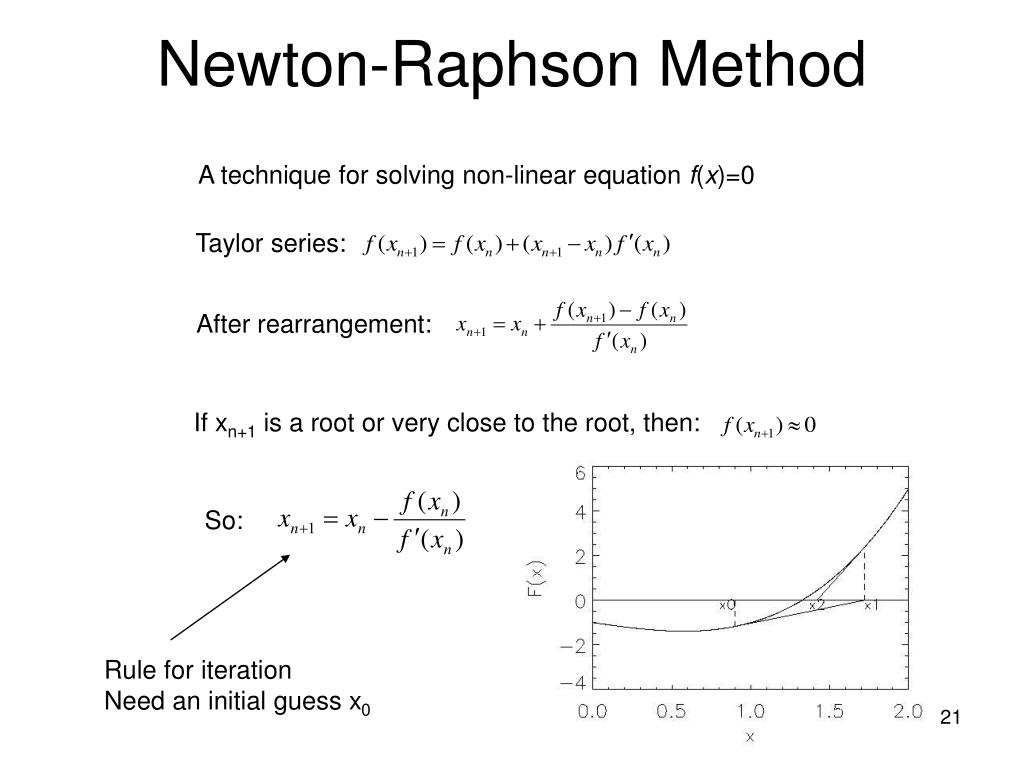

I programmed a neural network to do binary classification in Python, and during the backpropagation step I used the Newton-Raphson method for optimization. Any kind of feedback would be appreciated, but mostly I'd like to know whether all of the gradients and Hessians were computed correctly. For the examples I ran, it seems to be working correctly, leading me to believe everything is OK.

An observation on the Hessian: to guarantee that the update direction for the weights and biases is a descent direction, I used the `aprox_pos_def()` function to approximate the Hessian with a positive definite matrix. You can see the theory behind this transformation here.

Another major help I need is with making the code more efficient. The computational cost of creating the Hessian matrices is really high as it is, and I wish there were a faster way of doing it.

For a better understanding of the Newton-Raphson method, the Wikipedia articles on it are good enough.

Fragments of the code, grouped by function:

- `# Almost pure Newton-Raphson method implementation for a neural network`
- `def model(X, Y, layerSizes, learningRate, max_iter = 100, plotN = 100):`
- `raise ValueError("Invalid vector sizes for X and Y -> X size = " + str(X.shape) + " while Y size = " + str(Y.shape) + ".")`
- `weights = initializeWeights(n_x, n_y, layerSizes)`
- `return newtonMethod(X, Y, weights, learningRate, numLayers, max_iter, plotN)`
- `def initializeWeights(n_x, n_y, layerSizes, scaler = 0.1, seed = None):`
- `weights = np.random.randn(n_h, n_hPrev)*scaler`
- `weights = np.random.random((n_y, n_hPrev))*scaler`
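To make the positive definite approximation of the Hessian concrete, here is a minimal sketch of one common way to do it: symmetrize the matrix and raise its eigenvalues to a small positive floor. The body of `aprox_pos_def()` is not shown in the post, so this may differ from what it actually does. The names `make_pos_def`, `newton_step`, and `min_eig` are hypothetical and chosen only for illustration.

```python
import numpy as np


def make_pos_def(H, min_eig=1e-6):
    """Hypothetical stand-in for aprox_pos_def(); the original may differ.

    Symmetrizes H and floors its eigenvalues at min_eig, so the result
    is symmetric positive definite.
    """
    H_sym = 0.5 * (H + H.T)                    # remove any asymmetry
    eigvals, eigvecs = np.linalg.eigh(H_sym)   # eigendecomposition of a symmetric matrix
    eigvals = np.maximum(eigvals, min_eig)     # lift non-positive eigenvalues
    return (eigvecs * eigvals) @ eigvecs.T     # rebuild V diag(eigvals) V^T


def newton_step(w, grad, H, learning_rate=1.0):
    """One damped Newton-Raphson update using the repaired Hessian."""
    H_pd = make_pos_def(H)
    direction = np.linalg.solve(H_pd, grad)    # solve H_pd d = grad without inverting
    return w - learning_rate * direction


# Example with an indefinite Hessian (one negative eigenvalue).
H = np.array([[2.0, 0.0],
              [0.0, -1.0]])
grad = np.array([1.0, 1.0])
w = np.zeros(2)
w_new = newton_step(w, grad, H)
```

Because the repaired matrix is positive definite, the step `w_new - w` has a negative inner product with the gradient, which is what makes it a descent direction. A very small `min_eig` can produce very long steps along the repaired directions, so the learning rate or the floor usually needs tuning.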
